Model Performance

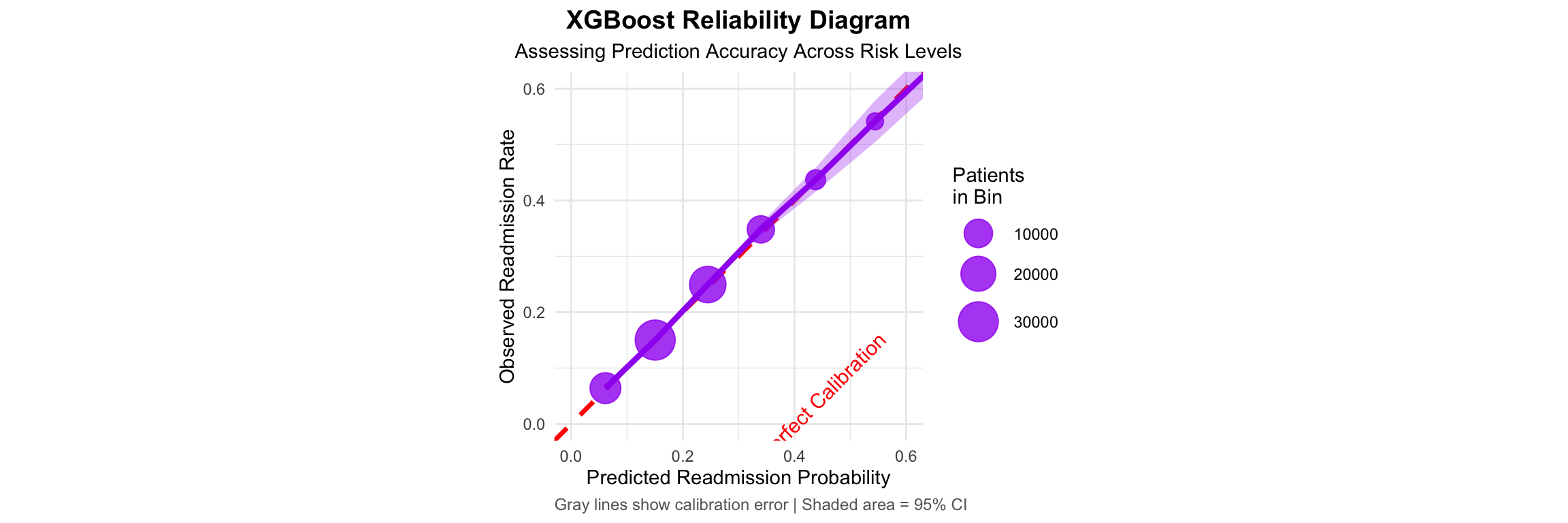

XGBoost emerged as the top performer, with the highest AUC (0.683), sensitivity (68.8%), and PPV (29.8%) of the three models, along with excellent calibration.

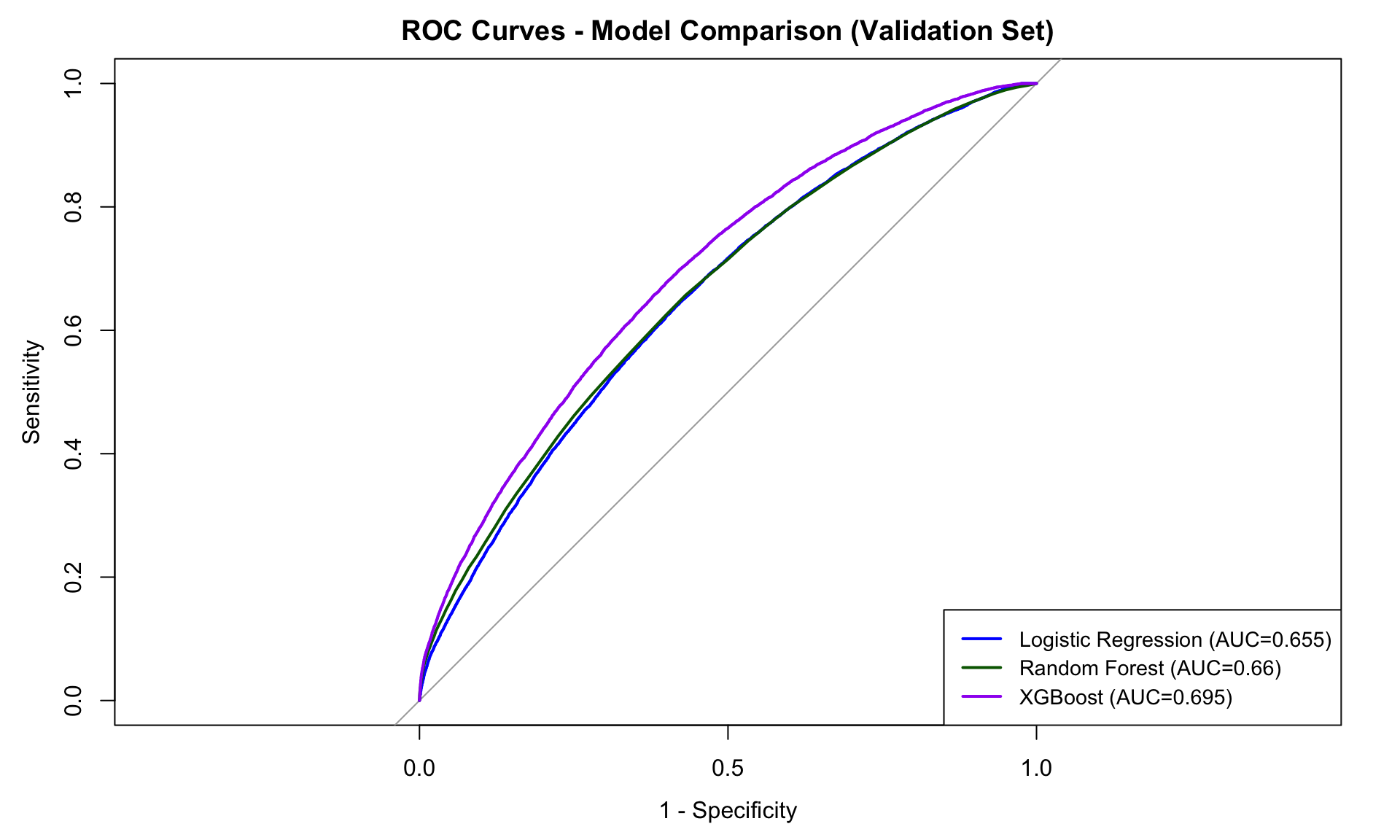

Model Comparison Results

| Model | AUC | Sensitivity | PPV |
|---|---|---|---|
| Logistic Regression | 0.655 | 64.2% | 28.5% |
| Random Forest | 0.660 | 65.1% | 29.2% |
| XGBoost | 0.683 | 68.8% | 29.8% |

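Key Finding: XGBoost's 29.8% PPV is roughly a 49% relative improvement over the 20% baseline readmission rate ((29.8 - 20) / 20 = 0.49). A patient flagged by the model is about 1.5 times as likely to be readmitted as a patient chosen at random, enabling more efficient resource allocation.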
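The arithmetic behind this finding can be checked with a short sketch. The function names below are illustrative and not taken from the original analysis. The "patients flagged per true readmission" figure is simply 1 / PPV, added here as one way to read the resource-allocation claim.

```python
def relative_improvement(ppv: float, baseline: float) -> float:
    """Relative gain of the model's PPV over the baseline event rate."""
    return (ppv - baseline) / baseline


def flagged_per_true_positive(ppv: float) -> float:
    """Average number of flagged patients needed to find one true readmission."""
    return 1.0 / ppv


# Values reported in the comparison table and key finding
xgb_ppv = 0.298
baseline_rate = 0.20

print(f"Relative improvement: {relative_improvement(xgb_ppv, baseline_rate):.1%}")
print(f"Flagged per readmission (model): {flagged_per_true_positive(xgb_ppv):.2f}")
print(f"Flagged per readmission (random): {flagged_per_true_positive(baseline_rate):.2f}")
```

Under these numbers, targeting by model score needs about 3.4 flagged patients per true readmission, compared with 5 when patients are selected at random.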

Top Predictive Features

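Well-Calibrated Model: expected calibration error (ECE) = 0.022, meaning predicted probabilities differ from observed readmission rates by about 2 percentage points on average, which indicates trustworthy probability estimates.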
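For readers unfamiliar with ECE, the following is a minimal sketch of one common way to compute it. It assumes 10 equal-width probability bins; the source does not state which binning scheme or bin count produced the 0.022 figure, so this is an illustration, not a reproduction of the original calculation.

```python
import numpy as np


def expected_calibration_error(y_true, y_prob, n_bins=10):
    """Weighted average gap between predicted probability and observed rate.

    Assumes equal-width bins on [0, 1]; other binning schemes give
    slightly different values.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.asarray(y_prob, dtype=float)

    # Interior bin edges; digitize maps each probability to a bin index 0..n_bins-1
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_ids = np.clip(np.digitize(y_prob, edges[1:-1]), 0, n_bins - 1)

    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if not mask.any():
            continue  # empty bins contribute nothing
        observed_rate = y_true[mask].mean()
        mean_predicted = y_prob[mask].mean()
        # Weight each bin's gap by the fraction of samples that fall in it
        ece += mask.mean() * abs(observed_rate - mean_predicted)
    return ece


# Toy example: every patient is given a 0.8 risk, but only 3 of 5 are readmitted
toy_labels = [1, 1, 1, 0, 0]
toy_probs = [0.8, 0.8, 0.8, 0.8, 0.8]
print(round(expected_calibration_error(toy_labels, toy_probs), 3))
```

In the toy example the single occupied bin has a predicted risk of 0.8 and an observed rate of 0.6, so the ECE is 0.2. A value near 0.022 would correspond to much smaller gaps across bins.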